Tiered Link Building With GSA SER

The Core Concept of Tiered Link Building

Every modern SEO campaign wrestles with a central contradiction: the links that carry the most weight are the hardest to acquire, while the links that are easy to get often pass negligible value or carry unacceptable risk. Tiered link building exists to solve this. It is a structured architecture where you direct your strongest, most editorially earned backlinks (Tier 1) straight to your money site, and then you build a supporting layer of links (Tier 2) that points at those Tier 1 assets rather than directly at your domain. A third layer (Tier 3) can push power into Tier 2, and so on. This creates a buffer. It insulates your main site from the footprint and variable quality of automated link sources while still funnelling link equity upward. Combined with the brute force of automation, specifically through tiered link building with GSA SER, the model becomes a scalable engine for ranking without leaving a direct paper trail of risky links pointed at your homepage.

Why GSA SER Defines the Modern Tiered Engine

GSA Search Engine Ranker is not a typical backlink tool that holds your hand through guest post outreach. It is an industrial-strength link builder that submits your content to an enormous variety of platforms — article directories, social bookmarks, blog comments, wiki pages, Web 2.0 properties, and more. Its real power emerges when you decouple it from your money site and use it exclusively to construct the lower tiers. By applying tiered link building with GSA SER, you weaponize volume and diversity where they are most needed: at Tiers 2 and 3, where raw link count still influences indexing and crawling, and where a diverse referring domain profile mimics organic growth.

Designing the Three-Tier Scaffold

Tier 1 – The Curated Handshake

Your first line of support consists of high-quality, contextually relevant assets. These are pages you control on authoritative platforms: medium-authority guest posts, niche edits on aged articles, manually crafted Web 2.0 blogs with genuine design and real articles, or even your own satellite sites on expired domains with solid backlink profiles. The core rule is that every Tier 1 link receives the same scrutiny you would apply to a direct money-site link, but because these assets are not your main brand, they can afford to accept a slightly more aggressive powering-up later. This is the receiving dock for all the horsepower generated by your automated tiers.

Tier 2 – The Automated Amplifier

Here is where tiered link building with GSA SER becomes a game-changer. Your Tier 2 goal is not to pass trust; it is to deliver link juice, deep indexation signals, and contextual relevance to your Tier 1 buffer pages. You point GSA SER at each of your Tier 1 URLs using a carefully filtered campaign. The key lies in dialling down the tool’s aggressive defaults. You restrict platforms to those that still offer do-follow juice and reasonable durability: high-quality article directories, social networks, Web 2.0 platforms that accept spun-but-readable content, and niche-relevant forum profiles. Every article you submit through this tier uses unique, heavily spun content that includes Tier 1 anchor text variations. Because GSA SER can build thousands of these links, you generate a powerful, sustained swell of authority that pushes your Tier 1 assets upward.

Tier 3 – The Indexation Firehose

Even the best Tier 2 links will die silently if search engines never crawl them. Tier 3 exists purely to force discovery. This layer is a tidal wave of low-level links — blog comments, trackbacks, image comments, cheap social bookmarks, and wiki links — all pointed at your Tier 2 URLs. Running tiered link building with GSA SER for Tier 3 means you can use minimal content, maximum speed, and a far more liberal platform selection, because the quality of this tier does not directly touch anything your visitors will ever see. The goal is ping-powered indexing. You blast these links with pinging services and indexers so that bots trip over your Tier 2 assets constantly, ensuring the equity you built there actually flows upward.

The Content Velocity Strategy

Link builders often fail because they treat automated campaigns as a set-it-and-forget-it pump. A sustainable tiered link building setup with GSA SER thrives on drip-feeding. You configure the tool to build a pre-determined number of links per day, per project, with random delays. For Tier 2, a steady 50-100 links daily across multiple campaigns can run for months without setting off algorithmic filters. For Tier 3, you can push higher numbers, but rotate the target Tier 2 URLs so that no single page gains an implausible number of links overnight. The magic lies in relentless, organic-looking growth that keeps crawlers returning. Google’s bot sees a vibrant, slowly expanding constellation of links around your Tier 1 assets and interprets it as genuine, persistent interest.
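The drip-feed arithmetic can be sketched in a few lines of Python. This is only a planning illustration, not GSA SER configuration or any part of its interface: the function names, the placeholder URLs, and the choice of one target per day are all assumptions, and only the 50-100 daily range comes from the text above.

```python
import random


def drip_schedule(days, low=50, high=100, seed=None):
    """Return one link target per day, each drawn uniformly from [low, high]."""
    rng = random.Random(seed)  # seeded so a plan can be reproduced
    return [rng.randint(low, high) for _ in range(days)]


def rotate_targets(urls, days):
    """Assign one target URL per day, cycling through the list in order."""
    return [urls[day % len(urls)] for day in range(days)]


# Hypothetical Tier 2 URLs, used only for illustration.
tier2_urls = ["tier2-a.example", "tier2-b.example", "tier2-c.example"]

plan = drip_schedule(days=30, seed=1)
targets = rotate_targets(tier2_urls, days=30)

for day, (url, count) in enumerate(zip(targets, plan), start=1):
    print(f"day {day:2d}: {count:3d} links -> {url}")
```

Because the targets cycle, each URL in this example receives links on only ten of the thirty days, which is the rotation idea described above in its simplest form.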

Anchor Text Sculpting Across Tiers

The anchor text distribution in tiered link building with GSA SER must follow a deliberate gravity model. At Tier 1, you use precision: 5-10% exact match anchors, a heavy dose of branded and naked URLs, and generous generic variations. At Tier 2, you invert the pyramid partially, allowing more phrase-match and LSI anchors pointing at Tier 1, because you are passing relevance signals through a buffer. By the time you reach Tier 3, anchors can degrade into anything: generic “click here,” “read more,” domain URLs, and even nonsense, because the sole function is to create a path for crawlers. GSA SER’s ability to auto-generate anchors from a keyword list and spin them across millions of submissions makes this manageable, but only if you set hard limits on the percentage of money-keyword anchors allowed per campaign.
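A hard limit on money-keyword anchors is easy to check with simple arithmetic. The sketch below assumes a hypothetical list of (anchor text, category) pairs and a 10% cap taken from the upper end of the Tier 1 range above. The category labels and function names are illustrative and are not GSA SER settings.

```python
from collections import Counter


def anchor_shares(anchors):
    """Return each anchor category's share of the total, as a fraction."""
    counts = Counter(category for _, category in anchors)
    total = sum(counts.values())
    return {category: n / total for category, n in counts.items()}


def exceeds_cap(anchors, category, cap):
    """True if the given category's share is strictly above the cap."""
    return anchor_shares(anchors).get(category, 0.0) > cap


# Hypothetical sample of 20 anchors: 2 exact match, 10 branded, 8 generic.
sample = (
    [("best widgets", "exact")] * 2
    + [("Acme Widgets", "branded")] * 10
    + [("click here", "generic")] * 8
)

shares = anchor_shares(sample)
print(shares)
print("exact-match over 10% cap:", exceeds_cap(sample, "exact", 0.10))
```

In this sample the exact-match share is 2 out of 20, so it sits at the cap rather than over it. Adding one more exact-match anchor would push it past the limit.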

Mitigating the Footprint Crisis

The most frequent objection to tiered link building with GSA SER is the risk of a detectable footprint. The tool leaves traces if you let it: identical email addresses, the same spun article skeleton across 10,000 pages, the same set of usernames, and predictable submission times. Neutralize this by investing in a pool of randomized private proxies assigned per campaign, using multiple catch-all email domains, and feeding the content spintax through multiple levels of synonymization and sentence restructuring before it ever touches the submitter. Crucially, never place GSA SER on the same machine or IP range as your money site’s analytics or Search Console access, and never use any link indexing service that requires authentication through your Google account. The tiered model itself is the ultimate footprint obfuscation: even if an algorithm devalues your Tier 2, your Tier 1 remains a hand-curated collection that can survive on its own merit.

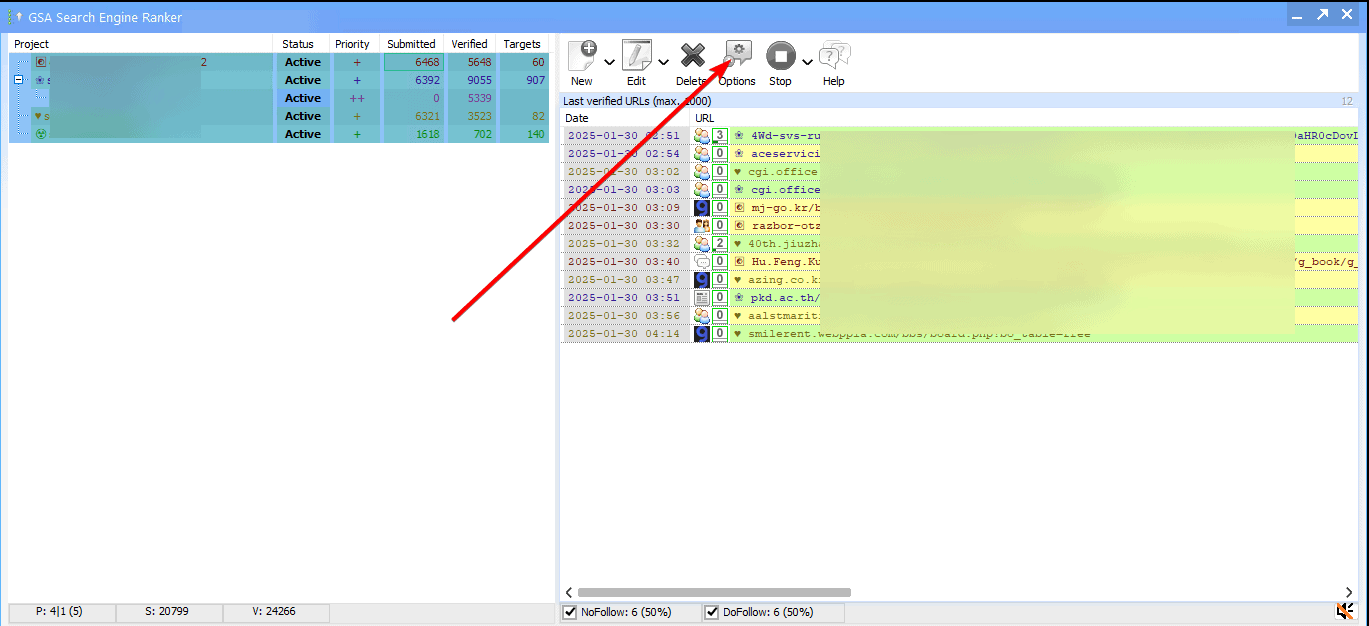

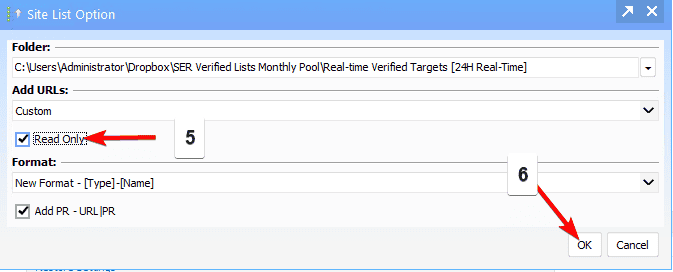

Verification Loops and Tier Pruning

Automation without monitoring is digital pollution. Every thirty days, crawl your Tier 1 links with a batch link checker. Identify which assets have lost referring domains or dropped in page authority. Adjust your GSA SER campaigns to redirect Tier 2 focus toward the strongest surviving Tier 1 properties and away from dead ones. In tiered link building with GSA SER, you often discover that a handful of Tier 1 assets absorb 80% of the total value; you then double down on powering those few. This creates a self-reinforcing loop: the rich Tier 1 URLs get richer, their outbound juice to your money site concentrates, and you stop wasting automated resources on properties Google no longer trusts.
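The pruning step amounts to ranking Tier 1 assets by some value metric and finding the smallest group that accounts for most of the total. Here is a minimal sketch, assuming you already have a per-URL score from your link checker. The metric, the URLs, and the numbers are hypothetical, and only the 80% threshold comes from the text above.

```python
def top_assets_covering(values, share=0.8):
    """Return the fewest top-ranked assets whose combined value reaches `share` of the total."""
    total = sum(values.values())
    ranked = sorted(values.items(), key=lambda item: item[1], reverse=True)
    picked, running = [], 0.0
    for url, value in ranked:
        if running >= share * total:
            break  # the assets picked so far already cover the requested share
        picked.append(url)
        running += value
    return picked


# Hypothetical scores, e.g. referring domains counted per Tier 1 asset.
tier1_scores = {
    "tier1-a.example": 50,
    "tier1-b.example": 35,
    "tier1-c.example": 8,
    "tier1-d.example": 4,
    "tier1-e.example": 3,
}

keep = top_assets_covering(tier1_scores, share=0.8)
print("focus Tier 2 campaigns on:", keep)
```

With these numbers the first two assets carry 85 of 100 points, so they are the ones to keep powering, while the remaining three are candidates for pruning.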

From Volume to Relevance in a Post-Spam World

The landscape has shifted. Ten years ago, you could pummel a page with random blog comments and watch it rise. Today, relevance filtering is aggressive, and link context matters enormously. Sophisticated marketers now use tiered link building with GSA SER to inject contextual scaffolding around their Tier 1 assets, not just to blast random links. They scrape target keywords, pull semantically related entities, and build Tier 2 articles that genuinely discuss subtopics surrounding their Tier 1 content. GSA SER’s article manager, fed with topic-specific spintax, can produce hundreds of variations of an article that includes phrases a topical authority would use. When those articles link to a Tier 1 buffer page about the same subject cluster, the search engine sees a coherent, topically relevant support network rather than a spam plume. This approach turns the old volume-only strategy into a relevance-at-scale engine.